Have you ever played the game Guess Who?

If not, here’s how it works: each player sees 24 pictures of different characters. You pick a card, which matches one of the pictures and names. You then ask simple yes/no questions to your opponent, like “does your person wear glasses?” and eliminate all those that don’t fit the bill. It’s a pretty straightforward process of elimination. If you combine a few different signifiers to make the questions more complex – for example, you ask “does your person wear glasses or a hat or have blond hair?” – you can get down to just three options with only four questions. By your fifth question, you have a 50/50 chance of correctly identifying the mystery person, based on a handful of characteristics.

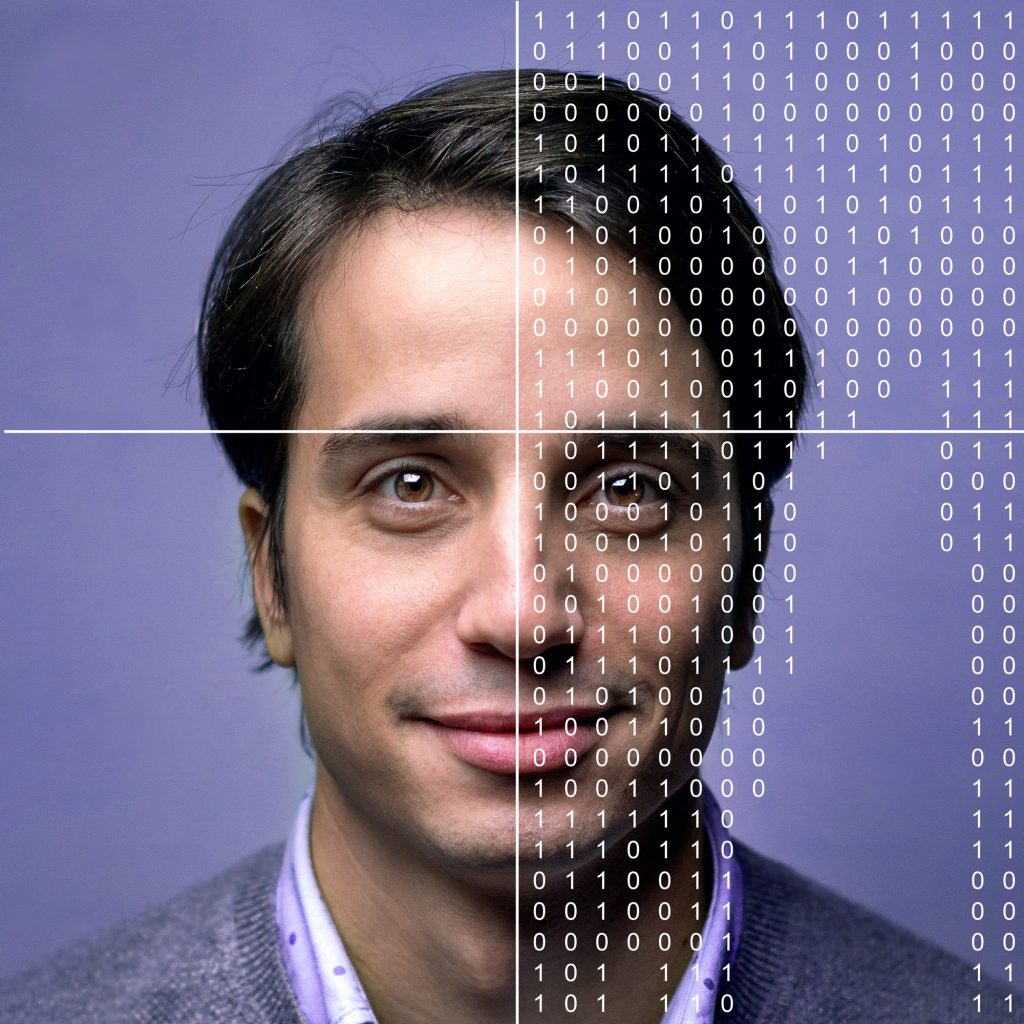
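To put a rough number on that process of elimination, here is a minimal Python sketch. It assumes an idealized game in which every yes/no question splits the remaining candidates as evenly as possible; real questions about glasses or hats rarely split that cleanly, so actual games tend to take a little longer. The function names are made up for illustration, and the 8 billion figure is a round-number assumption for the world population.

```python
def questions_needed(candidates: int) -> int:
    """Yes/no questions that guarantee singling out one candidate,
    assuming each question halves the remaining field."""
    # (n - 1).bit_length() equals ceil(log2(n)) for n >= 1, with no float rounding.
    return (candidates - 1).bit_length()


def halving_trace(candidates: int) -> list[int]:
    """Worst-case number of candidates left after each question."""
    trace = [candidates]
    while candidates > 1:
        candidates = (candidates + 1) // 2  # the larger half survives
        trace.append(candidates)
    return trace


print(halving_trace(24))                # [24, 12, 6, 3, 2, 1]
print(questions_needed(24))             # 5
print(questions_needed(8_000_000_000))  # 33
```

Under those assumptions, five well-chosen questions settle a 24-character board, and only 33 would be enough to single out one person among roughly 8 billion. Each yes/no attribute left in a dataset is potentially one of those questions.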

Similarly, when you’re working with real-life datasets, it takes astoundingly few scraps of information to figure out who the real person underneath the data is, even when all personally identifying information has been removed.

Worse, hackers and other unscrupulous actors aren’t just asking a few simple questions and using a process of elimination. They have powerful algorithmic tools at their disposal that can match millions of anonymized data points against directories of real people in the blink of an eye, or even reverse-engineer more sophisticated protections, like randomized perturbation.
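The matching step can be surprisingly simple. The sketch below is a toy illustration, not a description of any real attack tool: it joins an "anonymized" table to a public directory on a few shared attributes. Every record, field name and the `link` function are invented for this example.

```python
from collections import defaultdict

# Hypothetical data: names were stripped, but three ordinary attributes remain.
ANONYMIZED = [
    {"zip": "02138", "birth_year": 1985, "sex": "F", "diagnosis": "asthma"},
    {"zip": "02139", "birth_year": 1990, "sex": "M", "diagnosis": "diabetes"},
    {"zip": "02138", "birth_year": 1972, "sex": "M", "diagnosis": "hypertension"},
]

# Hypothetical public directory (think of a voter roll or a social media scrape).
DIRECTORY = [
    {"name": "Alice Example", "zip": "02138", "birth_year": 1985, "sex": "F"},
    {"name": "Bob Example", "zip": "02139", "birth_year": 1990, "sex": "M"},
    {"name": "Carol Example", "zip": "02139", "birth_year": 1990, "sex": "F"},
    {"name": "Dan Example", "zip": "02138", "birth_year": 1972, "sex": "M"},
    {"name": "Erin Example", "zip": "02138", "birth_year": 1972, "sex": "M"},
]

SHARED_FIELDS = ("zip", "birth_year", "sex")


def link(anonymized, directory):
    """Return {name: diagnosis} for records matching exactly one directory entry."""
    index = defaultdict(list)
    for person in directory:
        index[tuple(person[f] for f in SHARED_FIELDS)].append(person["name"])

    reidentified = {}
    for record in anonymized:
        names = index.get(tuple(record[f] for f in SHARED_FIELDS), [])
        if len(names) == 1:  # a unique match pins the record to one person
            reidentified[names[0]] = record["diagnosis"]
    return reidentified


print(link(ANONYMIZED, DIRECTORY))
```

In this made-up example, two of the three records match exactly one name each. The third matches two people in the directory, so it stays ambiguous until one more attribute is added.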

In a bid to prevent accidental breaches of sensitive data, organizations are turning to all kinds of elaborate privacy-enhancing technologies. These include cryptography-based approaches such as homomorphic encryption and Secure Multi-Party Computation (SMPC), as well as Federated Machine Learning (FML). In federated learning, the raw data stays with the parties that hold it: each one trains a machine learning model on its own portion, and only the resulting model updates are shared and combined at the end.

But as these technologies get smarter and more innovative, so do the cybercriminals looking for a chink in the armor. And privacy and security regulations around the world are getting more stringent all the time. Faced with the pressure of complying with the General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and/or the Health Insurance Portability and Accountability Act (HIPAA), many organizations are spooked into locking their data away entirely rather than taking any risks with their customers’ privacy.

But what if it didn’t matter if you could join all the dots back together? What if, like Guess Who?, the characters at the root of the datasets were entirely fictional?

This is the basic principle of synthetic data. The synthetic dataset is generated from a real one, so it preserves the statistical patterns, and with them the insights and value, of the original. But in this dataset, the individual people don’t exist. The data points don’t lead back to any real person who could become a victim of a privacy breach or identity theft.
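To make "generated from a real one" a little more concrete, here is a deliberately tiny sketch. It is not how any production synthetic data generator works; it only assumes a two-column dataset (age and income), fits a simple statistical model to it, and then samples brand-new rows from that model. The data, the `fit` and `sample` functions, and the choice of a Gaussian plus a straight-line relationship are all assumptions made for illustration.

```python
import random
import statistics

# Hypothetical "real" records: (age, annual income). Invented for illustration.
REAL = [(23, 31000), (31, 42000), (38, 52000), (45, 61000),
        (52, 66000), (29, 39000), (60, 72000), (41, 55000)]


def fit(rows):
    """Summarize the real data: the age distribution plus a linear age-income trend."""
    ages = [a for a, _ in rows]
    incomes = [i for _, i in rows]
    mean_age, sd_age = statistics.mean(ages), statistics.stdev(ages)
    mean_income = statistics.mean(incomes)
    cov = sum((a - mean_age) * (i - mean_income) for a, i in rows) / (len(rows) - 1)
    slope = cov / sd_age ** 2
    intercept = mean_income - slope * mean_age
    residuals = [i - (intercept + slope * a) for a, i in rows]
    return {"mean_age": mean_age, "sd_age": sd_age, "slope": slope,
            "intercept": intercept, "residual_sd": statistics.stdev(residuals)}


def sample(model, n, seed=0):
    """Draw n synthetic (age, income) rows from the fitted model only."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        age = rng.gauss(model["mean_age"], model["sd_age"])
        income = (model["intercept"] + model["slope"] * age
                  + rng.gauss(0, model["residual_sd"]))
        rows.append((round(age), round(income)))
    return rows


model = fit(REAL)
synthetic = sample(model, 1000)
print(synthetic[:3])
```

The sampling step never looks at an individual real row, only at the fitted summary, yet the synthetic rows keep the overall shape of the original: a similar average age and the same upward trend of income with age.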

Can you imagine the relief it would offer to be able to use, scale and collaborate on these datasets freely, without having to worry about privacy preservation at all? To know that you can’t fall foul of your data protection obligations because there’s no one in this equation to protect?

Preventing real identities from being exposed is a losing battle, especially when you start combining datasets, or exploring and manipulating these datasets in interesting ways. Far better to remove the problem entirely, by making privacy a non-issue. After all, the only way to truly ensure your data-driven activities pose no risk to individual people is to make sure that there are no real people to be exposed in the first place.

Find out more about how synthetic data preserves the privacy of your datasets (and how it stacks up against other approaches) in this in-depth article.